“Tokens are the currency, the alphabet, and the bottleneck of modern AI.”

They’re not a perfect abstraction of language, but a clever engineering compromise that lets models scale while handling the messiness of real text.

Tokens Explained

We’re gradually getting used to a world where AI is a natural part of our daily lives. As this shift continues, so does our understanding of how AI evolves and how useful it can be.

Yet one concept still causes confusion for many: tokens.

At a basic level, tokens are often described as the unit we “pay” with when using AI, which is largely true. However, that is only part of the picture. Tokens are the fundamental unit that determines how AI models read, remember, and reason, and ultimately how usage is billed.

Understanding them properly allows you to write better prompts, estimate costs more accurately, debug unexpected behaviours, and get far more value from modern AI models.

Why not make each word a token?

Large language models do not process raw text as characters or full words. They operate entirely on numbers, specifically vectors that represent discrete units we call ‘tokens’.

The process begins with a tokenizer, which converts your input text into a sequence of token IDs from a fixed vocabulary, typically ranging from 30,000 to over 200,000 entries. Each ID is then mapped to a high-dimensional vector (an embedding), and those vectors are what the model’s transformer layers actually process.
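To make that pipeline concrete, here is a minimal sketch of the two steps: text to token IDs, then IDs to vectors. Everything in it is invented for illustration: the seven-entry vocabulary, the greedy longest-match rule (real BPE tokenizers apply learned merges instead), and the random 4-dimensional vectors standing in for learned embeddings.

```python
import random

# Hypothetical toy vocabulary: token string -> token ID.
TOY_VOCAB = {"Tokens": 0, " are": 1, " the": 2, " currency": 3,
             " of": 4, " AI": 5, ".": 6}


def toy_encode(text, vocab):
    """Greedy longest-match encoding against a fixed vocabulary (illustrative only)."""
    candidates = sorted(vocab, key=len, reverse=True)  # longest entries first
    ids = []
    pos = 0
    while pos < len(text):
        for token in candidates:
            if text.startswith(token, pos):
                ids.append(vocab[token])
                pos += len(token)
                break
        else:
            # No vocabulary entry matches here; real tokenizers fall back to bytes.
            raise ValueError(f"No token covers position {pos}: {text[pos:]!r}")
    return ids


def make_embedding_table(vocab_size, dim, seed=0):
    """Random stand-in for a learned embedding matrix: one vector per token ID."""
    rng = random.Random(seed)
    return [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(vocab_size)]


token_ids = toy_encode("Tokens are the currency of AI.", TOY_VOCAB)
embeddings = make_embedding_table(len(TOY_VOCAB), dim=4)
vectors = [embeddings[i] for i in token_ids]  # what the model would actually consume

print(token_ids)  # [0, 1, 2, 3, 4, 5, 6]
```

The model never sees the strings on the left of the vocabulary, only the IDs and the vectors looked up from them.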

In terms of granularity, tokens sit between characters and words:

• Character-level → too many tokens, leading to long and inefficient sequences

• Word-level → vocabulary grows uncontrollably due to rare words, misspellings, and variations like “running”, “runner”, “ran”, as well as multilingual content

• Subword-level → the approach used by modern LLMs, balancing vocabulary size with flexibility

As a general rule and a good approximation, in English:

1 token ≈ 4 characters ≈ 0.75 words

So 1,000 tokens is roughly 750 words, although this varies depending on language, punctuation, code, and emojis.
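As a quick illustration, the rule of thumb can be turned into a two-line estimator. This is only the heuristic above written as code, with a hypothetical function name; it is not a tokenizer and will drift for code, emojis, and non-English text.

```python
import math


def estimate_tokens(text):
    """Rough English-only estimates: (by characters, by words)."""
    by_chars = math.ceil(len(text) / 4)             # 1 token is about 4 characters
    by_words = math.ceil(len(text.split()) / 0.75)  # 1 token is about 0.75 words
    return by_chars, by_words


print(estimate_tokens("Tokens are the currency of AI."))  # (8, 8)
```

The sample sentence has 30 characters and 6 words, so both rules land on about 8 tokens.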

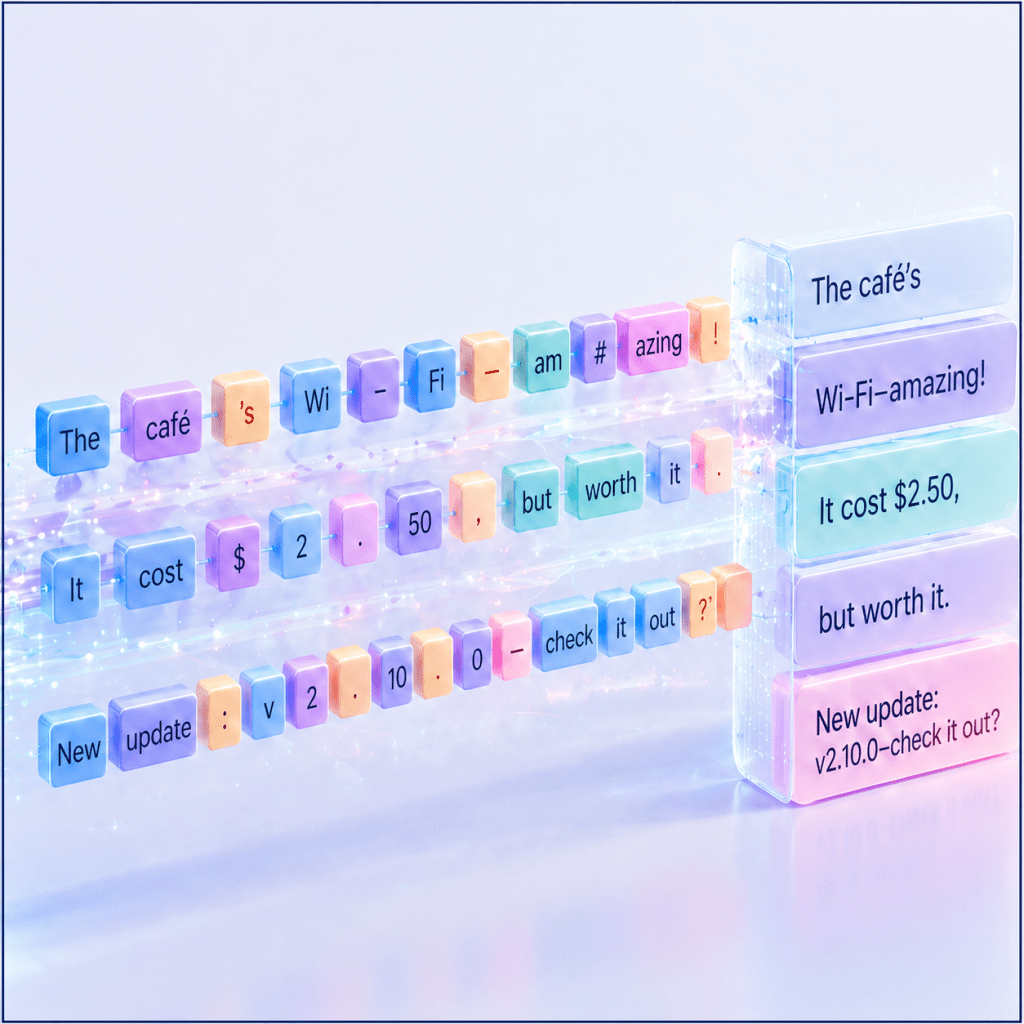

Subword Tokenisation in Practice

Modern tokenizers rely on algorithms such as Byte-Pair Encoding (BPE), WordPiece, and Unigram. Toolkits like SentencePiece, which implements BPE and Unigram, are language-agnostic and treat whitespace as an ordinary symbol, usually represented by the special character ▁.

Here is a simplified example using a GPT-style BPE tokenizer (such as cl100k_base or similar):

Input:

“Tokens are the currency of AI.”

Possible tokenization (approximate and model-dependent):

• [“Tokens”, “ are”, “ the”, “ currency”, “ of”, “ AI”, “.”]

Now consider less common or more complex cases:

• “unhappiness” → [“un”, “happiness”] or [“un”, “happi”, “ness”]

• “ChatGPT” → may remain whole if common, or split into [“Chat”, “G”, “PT”]

• Code: for i in range(10): → common keywords such as for, in, and range often stay intact, while unusual identifiers and long numbers may be split into several pieces

• Emojis or non-English text → often broken into smaller byte-level pieces, as many modern tokenizers operate at a byte level

A few details that often catch people out:

• Leading spaces are usually part of the token, so “ hello” and “hello” may be treated differently

• Numbers, URLs, emails, and code can be split in unexpected ways

• Rare names or new slang are often broken into multiple subword tokens, which can slightly increase token usage and sometimes affect performance

The key insight is that the tokenizer is shaped by the data it was trained on. Frequent patterns become single tokens, making them efficient. Rare or unfamiliar patterns are split into smaller pieces, which remain understandable but require more tokens to process.
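A toy version of BPE training shows why this happens. The sketch below is a simplified, character-level illustration with hypothetical function names and a made-up corpus; production tokenizers work on bytes, pre-split text with regular expressions, and learn tens of thousands of merges.

```python
from collections import Counter


def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` with one merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def train_toy_bpe(words, num_merges):
    """Learn up to `num_merges` merges by repeatedly fusing the most frequent pair."""
    corpus = Counter(tuple(word) for word in words)  # word as characters -> frequency
    merges = []
    for _ in range(num_merges):
        pair_counts = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break  # every word is already a single symbol
        # Highest count wins; ties are broken by the pair itself so runs are repeatable.
        best = max(pair_counts, key=lambda p: (pair_counts[p], p))
        merges.append(best)
        merged = Counter()
        for symbols, freq in corpus.items():
            merged[merge_pair(symbols, best)] += freq
        corpus = merged
    return merges


def apply_bpe(word, merges):
    """Tokenize a word by replaying the learned merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


# Made-up corpus: "the" is very common, "zebra" appears once.
merges = train_toy_bpe(["the"] * 50 + ["then"] * 5 + ["zebra"], num_merges=2)

print(apply_bpe("the", merges))    # ['the']
print(apply_bpe("then", merges))   # ['the', 'n']
print(apply_bpe("zebra", merges))  # ['z', 'e', 'b', 'r', 'a']
```

With only two merges, the frequent word collapses into one token while the rare one still costs five, which is the same effect described above at a much smaller scale.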

Token Count Matters More Than You Think

Tokens quietly sit at the center of everything a model does, shaping not only how it reads and responds, but also how far it can “see,” how fast it works, and how much it costs to use.

Every model operates within a fixed context window, which is effectively its working memory. Modern systems have expanded this dramatically, with many production models comfortably handling well over one hundred thousand tokens, and some flagship variants stretching into the millions. This makes it possible to load entire books, large codebases, or long conversational histories into a single interaction. But this capacity is not infinite. Once you exceed it, something has to give: depending on the application, the request is rejected or the earliest content is dropped and is no longer visible to the model.
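How the overflow is handled is usually up to the application around the model. One common approach, sketched here under simple assumptions, is to keep the most recent messages that fit a token budget and drop the rest. The function names are hypothetical, and the counter is the rough four-characters-per-token heuristic rather than a real tokenizer.

```python
import math


def approx_tokens(text):
    """Rough English heuristic: about 4 characters per token."""
    return math.ceil(len(text) / 4)


def fit_to_window(messages, max_tokens, count=approx_tokens):
    """Keep the newest messages whose combined token count fits the budget."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count(message)
        if used + cost > max_tokens:
            break  # this message and everything older is dropped
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = ["a" * 40, "b" * 40, "c" * 40]  # about 10 tokens each
trimmed = fit_to_window(history, max_tokens=25)
print(len(trimmed))  # 2
```

Passing a real tokenizer's counting function as `count` would make the budget exact; more careful strategies also pin a system prompt or summarise what gets dropped.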

At the same time, tokens are the unit of cost. Every token you send and every token the model generates is counted and billed. Input tokens are typically cheaper than output tokens, so longer prompts and especially verbose responses can add up quickly. Understanding token usage is therefore not just technical knowledge, but a practical way to control spend and choose the right model for the task.
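The arithmetic is simple enough to sketch. The prices below are hypothetical placeholders, not taken from any provider's price list; the only assumption carried over from the text is that input and output tokens are priced separately, usually per million tokens.

```python
def estimate_cost(input_tokens, output_tokens,
                  input_price_per_million, output_price_per_million):
    """Return the cost of one request, in the same currency as the prices."""
    total = (input_tokens * input_price_per_million
             + output_tokens * output_price_per_million)
    return total / 1_000_000


# Hypothetical prices: 1.00 per million input tokens, 4.00 per million output tokens.
cost = estimate_cost(input_tokens=2_000, output_tokens=500,
                     input_price_per_million=1.00,
                     output_price_per_million=4.00)
print(f"{cost:.4f}")  # 0.0040
```

In this made-up example, 500 output tokens cost as much as 2,000 input tokens, which is why trimming verbose responses often saves more than trimming prompts.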

There is also a direct relationship between tokens and performance. More tokens mean more computation. Even with modern optimisations, longer contexts require more processing and can introduce subtle effects, such as information in the middle of a prompt receiving less attention. This is why simply adding more content does not always improve results. Placement and clarity often matter more than sheer volume.

This connects closely to reasoning quality. Some models benefit from more explicit, step-by-step instructions, which naturally consume additional tokens but can improve accuracy. At the same time, efficient prompts, those that are clear, structured, and information-dense, often outperform longer, more verbose ones. It is not about using more tokens, but about using them well.

A lot of confusion comes from how tokens are perceived. They are often mistaken for words, but the relationship is only approximate. A short, common word might be a single token, while a longer or more technical term could be split into several. The model itself does not “see” language in the way we do. It never reads letters or understands words directly, but instead processes sequences of token IDs and their learned representations. This is why it can sometimes fail at tasks that seem trivial to humans, such as counting letters in a word.

It is also tempting to assume that larger context windows automatically lead to better results. In reality, longer contexts introduce their own challenges. Attention becomes more diffuse, and retrieving the right piece of information at the right time becomes harder. Similarly, not all tokenizers behave the same way. Different model families split text differently, which can affect both cost and performance. This becomes especially important in tasks like retrieval-augmented generation, where precise control over token boundaries matters.

A useful mental model is that tokens represent capacity, not intelligence. A larger context window gives the model more room to work with, but it does not make it inherently smarter. In many cases, a concise, well-structured prompt will outperform a longer, less focused one.

In practice, a rough estimate helps. In English, one token is often about four characters, or roughly three quarters of a word, though this varies with punctuation, code, and other languages. For exact counts, use a dedicated tool such as OpenAI’s tiktoken or Hugging Face’s tokenizers library, making sure the tokenizer matches the model you are calling. Many modern interfaces now expose token counts directly, making it easier to iterate and refine.
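A small helper can combine both approaches. The sketch below uses tiktoken's `get_encoding` and `encode` calls when the library is installed and its vocabulary can be loaded, and otherwise falls back to the rough estimate. The helper name and the choice of `cl100k_base` are assumptions for illustration; use the encoding that matches your model.

```python
import math


def count_tokens(text, encoding_name="cl100k_base"):
    """Return (count, exact): an exact count via tiktoken, else a rough estimate."""
    try:
        import tiktoken  # third-party package: pip install tiktoken

        encoding = tiktoken.get_encoding(encoding_name)
        return len(encoding.encode(text)), True
    except Exception:
        # tiktoken is missing, its vocabulary file could not be loaded, or the
        # text could not be encoded: fall back to about 4 characters per token.
        return math.ceil(len(text) / 4), False


count, exact = count_tokens("Tokens are the currency of AI.")
print(count, "exact" if exact else "estimated")
```

Returning the `exact` flag alongside the count keeps it obvious whether a number came from a real tokenizer or from the heuristic.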

Ultimately, working effectively with tokens is about awareness and experimentation. Removing unnecessary fluff, structuring inputs clearly, placing key information deliberately, and testing how different inputs are tokenized can make a noticeable difference. This becomes even more important with multilingual data, code, or long documents, where token counts can increase quickly and naive chunking strategies can waste valuable context.
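For long documents, one alternative to naive character-based splitting is to chunk by token count, with a small overlap so that content cut at a boundary still appears whole in one chunk. This is a minimal sketch with a hypothetical function name; it assumes the text has already been converted to a list of token IDs by whichever tokenizer your model uses.

```python
def chunk_tokens(token_ids, chunk_size, overlap=0):
    """Split a token ID list into chunks of at most `chunk_size` tokens.

    Consecutive chunks share `overlap` tokens.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(token_ids), step):
        chunks.append(token_ids[start:start + chunk_size])
        if start + chunk_size >= len(token_ids):
            break  # this chunk already reaches the end of the input
    return chunks


ids = list(range(10))  # stand-in for real token IDs
print(chunk_tokens(ids, chunk_size=4, overlap=1))
# [[0, 1, 2, 3], [3, 4, 5, 6], [6, 7, 8, 9]]
```

Because the budget is counted in tokens rather than characters, every chunk is guaranteed to fit the limit regardless of language or content type.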

The more you work with tokens, the more intuitive they become. And once they do, you start to see them not just as a billing mechanism, but as the underlying currency of how models think.

Final Reflection

I will close with the opening quote:

“Tokens are the currency, the alphabet, and the bottleneck of modern AI.”

They are not a perfect representation of language, but a highly effective engineering compromise that allows models to scale while still handling the complexity and messiness of real-world text.

Once you internalise that large language models predict the next token rather than “think” in words, everything starts to shift. You begin to design prompts with intention, use tokens more efficiently, and measure interactions with greater precision. That is the moment you move from casual use to true fluency.

As context windows expand into the millions, the real advantage no longer comes from access alone, but from understanding. It comes from knowing what the model is actually “seeing,” how information is structured, and where attention is likely to land.

Mastering tokens leads to tighter prompts, clearer reasoning, better cost control, and more consistent results. And in a landscape where models continue to evolve rapidly, that understanding becomes a lasting edge, independent of whichever model comes next.
